AI PCs are a sleeper asset class hiding in plain sight

Every AI PC sold this year contains a neural processing unit that nobody is billing for. That is either waste — or opportunity. While the investment world fixates on data center capex, GPU allocations, and cloud hyperscaler revenue, a parallel hardware buildout is happening at the device level — quietly, at scale, and almost entirely unpriced by the market.

This is the most interesting asymmetry in tech investing right now.

The consensus is wrong — and expensively so

The dominant narrative around AI investment runs something like this: AI is a cloud story. Compute centralizes. The winners are whoever owns the infrastructure. Buy the shovels.

It is not a stupid thesis. It has made people money. But it is increasingly incomplete — and incomplete theses have a way of becoming expensive ones at the point of revision.

Here is what the consensus is missing: the client device is not dying. It is being upgraded into an AI inference terminal. And the silicon enabling that upgrade is already shipping at scale, on track to reach hundreds of millions of PCs worldwide, without the market having meaningfully priced what that means.

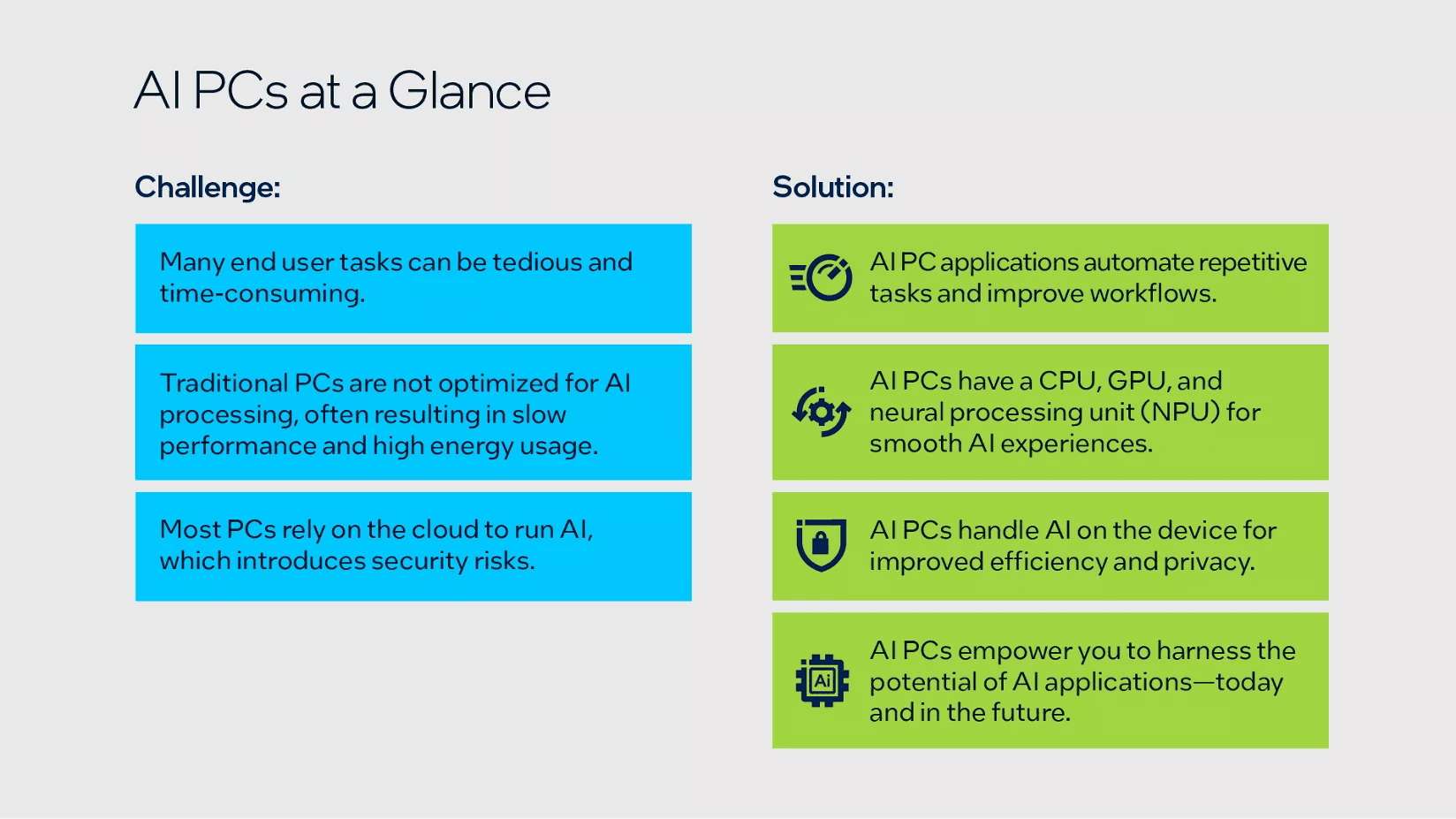

What an AI PC actually is — strip out the marketing

Before making the investment case, it is worth being precise about what we mean. "AI PC" has become a marketing term loose enough to cover almost anything. That looseness is part of why the opportunity is being missed.

A genuine AI PC contains a dedicated Neural Processing Unit (NPU) designed specifically for low-power, continuous inference workloads. Not a GPU repurposed for AI tasks. Not a CPU with a few extra instruction sets. A purpose-built inference engine, usually integrated into the main processor, capable of 10 to 45+ TOPS (trillions of operations per second) and able to run persistently in the background at a fraction of the power draw of a GPU.

Intel's Lunar Lake, AMD's Ryzen AI series, and Qualcomm's Snapdragon X Elite all ship with NPUs of this class. These are no longer premium add-ons. They are becoming table stakes in the PC refresh cycle.

The utilization gap is enormous

Here is the key fact: almost none of this silicon is being used.

The hardware is shipping. The software ecosystem to route workloads to the NPU is embryonic. Most applications — even AI-enabled ones — are not yet written to take advantage of local inference capacity. The result is a growing installed base of inference-capable silicon sitting largely idle on every desk and lap.

This has happened before. GPU silicon was shipping years before deep learning frameworks matured enough to exploit it. The companies that positioned into that gap — in silicon, in tooling, in software — generated some of the defining returns of the last decade. The pattern is repeating.

Why agentic AI is the unlock

The utilization gap will not close on its own. It needs a catalyst. That catalyst is agentic AI — and it is arriving now.

Desktop agents, tools designed to autonomously perform complex tasks using the data on your local machine, have a fundamental problem with pure cloud architectures. They require persistent, low-latency awareness of your files, emails, calendar, and applications. Routing every micro-inference through a cloud API introduces latency, cost, and privacy exposure that make the experience noticeably worse.

NPUs solve this. Local pre-processing — file indexing, context compression, intent classification, screen analysis — eliminates an enormous category of cloud round-trips that are high-frequency but low-value. The result is a hybrid architecture: local silicon handles orchestration and pre-processing; cloud LLMs handle the heavy reasoning. This is not a theoretical model. It is already being implemented in production desktop agent tools.
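
The split described above can be sketched as a simple router. This is a minimal illustration, not any vendor's implementation: the task names, the `LOCAL_TASKS` set, and the `route` and `plan` functions are hypothetical, chosen only to mirror the pre-processing categories listed above.

```python
from dataclasses import dataclass

# Hypothetical task kinds, mirroring the pre-processing categories named above.
LOCAL_TASKS = {
    "file_indexing",
    "context_compression",
    "intent_classification",
    "screen_analysis",
}


@dataclass
class Task:
    kind: str
    payload: str


def route(task: Task) -> str:
    """Return 'npu' for high-frequency pre-processing, 'cloud' otherwise."""
    return "npu" if task.kind in LOCAL_TASKS else "cloud"


def plan(tasks: list[Task]) -> dict[str, int]:
    """Count how many tasks land on each side of the hybrid split."""
    counts = {"npu": 0, "cloud": 0}
    for task in tasks:
        counts[route(task)] += 1
    return counts


# Illustrative workload: three cheap local steps feed one cloud reasoning call.
tasks = [
    Task("intent_classification", "reschedule my 3pm"),
    Task("context_compression", "last 40 emails in thread"),
    Task("screen_analysis", "active window"),
    Task("multi_step_reasoning", "draft a reply and propose new times"),
]
print(plan(tasks))
```

In this toy workload, three of the four steps never leave the device. Only the step that needs heavy reasoning becomes a cloud call.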

The economics are structural, not marginal

Some will argue that cloud inference costs will fall fast enough to make this irrelevant. This misunderstands the nature of the advantage.

The case for local compute is not primarily about cost per token. It is about latency, privacy, and the always-on requirement of persistent agents. A desktop agent that monitors your inbox, tracks your calendar, and maintains a live knowledge graph of your organization cannot afford the round-trip overhead of a cloud call for every micro-task. No amount of price reduction changes that architectural constraint.
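
A back-of-the-envelope calculation makes the constraint concrete. Every number below is an illustrative assumption rather than a measurement, and the function name is hypothetical. The point is only how quickly per-call latency compounds for an always-on agent.

```python
# All figures are illustrative assumptions, not measurements.
MICRO_TASKS_PER_HOUR = 600   # assumed: one background check every 6 seconds
CLOUD_ROUND_TRIP_MS = 300    # assumed network + queueing + inference time
LOCAL_INFERENCE_MS = 20      # assumed on-device NPU inference time


def waiting_seconds_per_hour(tasks_per_hour: int, latency_ms: int) -> float:
    """Total time spent waiting on inference in one hour, in seconds."""
    return tasks_per_hour * latency_ms / 1000


cloud = waiting_seconds_per_hour(MICRO_TASKS_PER_HOUR, CLOUD_ROUND_TRIP_MS)
local = waiting_seconds_per_hour(MICRO_TASKS_PER_HOUR, LOCAL_INFERENCE_MS)
print(f"cloud: {cloud:.0f}s/hour, local: {local:.0f}s/hour")
```

Price per token appears nowhere in this calculation. Under these assumptions, the gap comes entirely from round-trip time, which cheaper cloud inference does not shrink.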

Add a second structural factor: power grid capacity. Data center electricity demand is growing faster than grid buildout in several major markets. On-device inference is not just cheaper at the margin. It may become a structural necessity as a demand relief valve for strained grids. The hybrid compute model shifts from cost optimization to essential infrastructure.

Where the value actually accrues

The investment framework here has four layers, and they are not equally priced.

The silicon layer — NPU designers and manufacturers — is partially priced in for the largest players. It is the layer most investors have found already.

The OS and runtime layer — whoever controls the software that routes workloads to the NPU — is a nascent platform battle that is almost entirely unpriced. This is a critical chokepoint, and the winner does not yet exist in an obvious form.

The agentic software layer — desktop agent tools built on the hybrid local-cloud architecture — is early but visible. These tools have the most direct route to demonstrating measurable productivity value, which drives enterprise adoption and switching costs.

The enterprise IT layer sits at the top. Organizations deploying these tools at scale bring procurement requirements, security mandates, and compliance standards that create durable lock-in for whoever wins early.

The most asymmetric opportunity is almost certainly layers two and three — least priced, highest optionality, earliest in the adoption curve.

The bear case is a timing risk, not a structural risk

The honest objection to this thesis is not "it will not happen." It is "the software fragmentation problem could delay it by years."

That is a real risk. NPU ecosystems are fragmented today across vendors, operating systems, and runtimes. Developers have little incentive to optimize for a hardware target that represents a fraction of the active base. The flywheel is slow to start.
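
The fragmentation shows up even inside a single cross-vendor runtime. ONNX Runtime, for example, reaches each vendor's NPU through a different execution provider, so an application has to choose among them at startup. The sketch below is illustrative only: the provider names are ONNX Runtime's, but the preference order, the vendor notes, and the `pick_provider` helper are assumptions.

```python
# Execution provider names as used by ONNX Runtime; the preference order
# and vendor notes are illustrative assumptions.
NPU_PROVIDERS = [
    "QNNExecutionProvider",       # Qualcomm NPUs
    "OpenVINOExecutionProvider",  # Intel hardware, including NPUs
    "VitisAIExecutionProvider",   # AMD Ryzen AI NPUs
]
FALLBACK = "CPUExecutionProvider"


def pick_provider(available: list[str]) -> str:
    """Return the first NPU-capable provider present, else fall back to CPU."""
    for name in NPU_PROVIDERS:
        if name in available:
            return name
    return FALLBACK


# In a real application, the list would come from
# onnxruntime.get_available_providers(); it is hard-coded here so the
# sketch runs without that dependency.
print(pick_provider(["OpenVINOExecutionProvider", "CPUExecutionProvider"]))
print(pick_provider(["CPUExecutionProvider"]))
```

Each branch of that loop represents a separate toolchain a developer has to build, test, and support, which is why the incentive to target NPUs stays weak while each one covers only part of the installed base.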

But this is a timing risk, not a structural one. The GPU ecosystem was similarly fragmented in 2010. It resolved — not through any single breakthrough, but through the accumulation of tooling, framework support, and developer familiarity. The question for an investor is not whether the NPU ecosystem scales. It is whether your time horizon is long enough to sit through the resolution.

What a contrarian does with this

The market is pricing AI as a data center story. The client device layer is being treated as a commodity input. That gap between narrative and economic reality is exactly where contrarian returns are built.

The catalyst that closes it will be visible in advance — look for the moment a major agentic product demonstrates measurably better performance through hybrid local-cloud compute. When that narrative shift arrives, it will move fast.

Until then, the most valuable compute in 2027 might already be sitting on every desk in your portfolio companies. Nobody is billing for it yet.

Want exposure to this shift — without building the portfolio yourself?

If this thesis resonates, the logical next question is execution. Identifying the opportunity is the easy part. Constructing and maintaining a portfolio that captures it across the full value chain — silicon, software, cloud infrastructure, enterprise IT — is significantly harder.

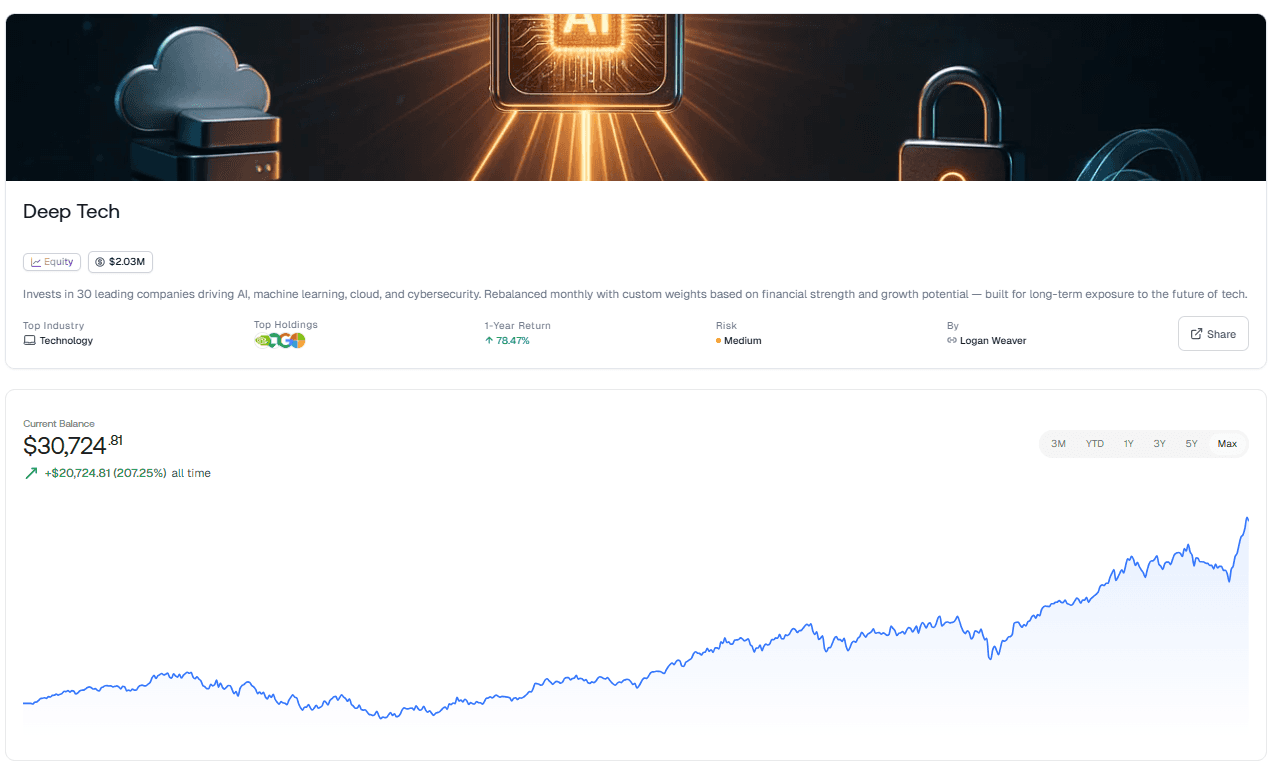

That is exactly what the Deep Tech Innovators Thematic Investing Strategy on Surmount is built to do.

What it is

This is a long-term, automated thematic strategy focused on the companies driving the deep technology revolution: AI, machine learning, cloud computing, cybersecurity, and the semiconductor and software infrastructure that underpins all of it. It holds a concentrated portfolio of 30 leading public companies with established track records in deep tech — or with significant capital deployed into the field.

The strategy does not just buy a broad tech index and call it a day. Each company's allocation is determined by custom weights derived from financial strength, peer comparison, and assessed upside potential. The portfolio rebalances monthly, automatically adjusting to keep allocations aligned with the strategy's conviction framework as the landscape evolves.
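
To make the mechanics concrete, here is a minimal sketch of score-based weighting followed by a rebalance. It is not Surmount's actual methodology, which this post does not disclose: the tickers, the scores, the equal blending of the three factors, and both function names are hypothetical.

```python
def target_weights(scores: dict[str, dict[str, float]]) -> dict[str, float]:
    """Blend factor scores equally (an assumption) and normalize to sum to 1."""
    blended = {t: sum(f.values()) / len(f) for t, f in scores.items()}
    total = sum(blended.values())
    return {t: s / total for t, s in blended.items()}


def rebalance_trades(
    holdings: dict[str, float], weights: dict[str, float]
) -> dict[str, float]:
    """Dollar amount to buy (+) or sell (-) per ticker to reach target weights."""
    portfolio_value = sum(holdings.values())
    return {
        t: weights[t] * portfolio_value - holdings.get(t, 0.0) for t in weights
    }


# Hypothetical tickers and factor scores on a 0-10 scale.
scores = {
    "AAA": {"financial_strength": 8, "peer_comparison": 6, "upside": 7},
    "BBB": {"financial_strength": 5, "peer_comparison": 5, "upside": 5},
    "CCC": {"financial_strength": 2, "peer_comparison": 4, "upside": 3},
}
weights = target_weights(scores)
trades = rebalance_trades({"AAA": 400.0, "BBB": 400.0, "CCC": 200.0}, weights)
print({t: round(w, 3) for t, w in weights.items()})
print({t: round(d, 2) for t, d in trades.items()})
```

The rebalance step is what "systematic" means in practice: positions that drifted above their target weight are trimmed and those below are topped up, with no discretionary judgment involved.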

Why it fits this thesis precisely

The AI PC opportunity described in this post does not live in a single stock. It lives across a value chain — NPU silicon manufacturers, OS and runtime platform plays, agentic software builders, and enterprise IT infrastructure providers. Many of those names are exactly the kind of deep tech companies this strategy was designed to hold.

More importantly, this is a long-duration thesis. The NPU utilization flywheel is early. The software ecosystem is embryonic. The payoff is measured in years, not quarters. A monthly-rebalanced, long-term strategy is structurally the right vehicle for this kind of exposure — it keeps you in the trade through the noise while systematically adjusting as the landscape shifts.

Automated, systematic, and built for the long game

The strategy runs on a daily interval with monthly rebalancing — meaning it is always current, never stale, and never reliant on you remembering to act. The allocation adjustments are systematic, not emotional. In a market where the AI narrative shifts weekly, that discipline is an edge in itself.

For investors who want diversified, professionally constructed exposure to the companies building the future of deep technology — and who want to do it without spending hours managing a portfolio — this is a compelling starting point.

Explore the Deep Tech Innovators Thematic Investing Strategy on Surmount →
