Custom AI Chips Aren’t Killing Nvidia. They’re Strengthening It

We’ve all heard the bears argue that the hyperscalers are coming for Nvidia’s lunch. At a surface level, that does look like the likely conclusion. After all, the company’s biggest customers (Google, AWS, Microsoft, and Meta) are pouring billions into their own internal silicon. They are standing up TPUs, Trainium, Maia, and MTIA chips with the explicit goal of reducing their reliance on the Green Giant.

The narrative is as clean as it is compelling: "Why pay Nvidia exorbitant margins when you can print your own chips for a fraction of the cost?"

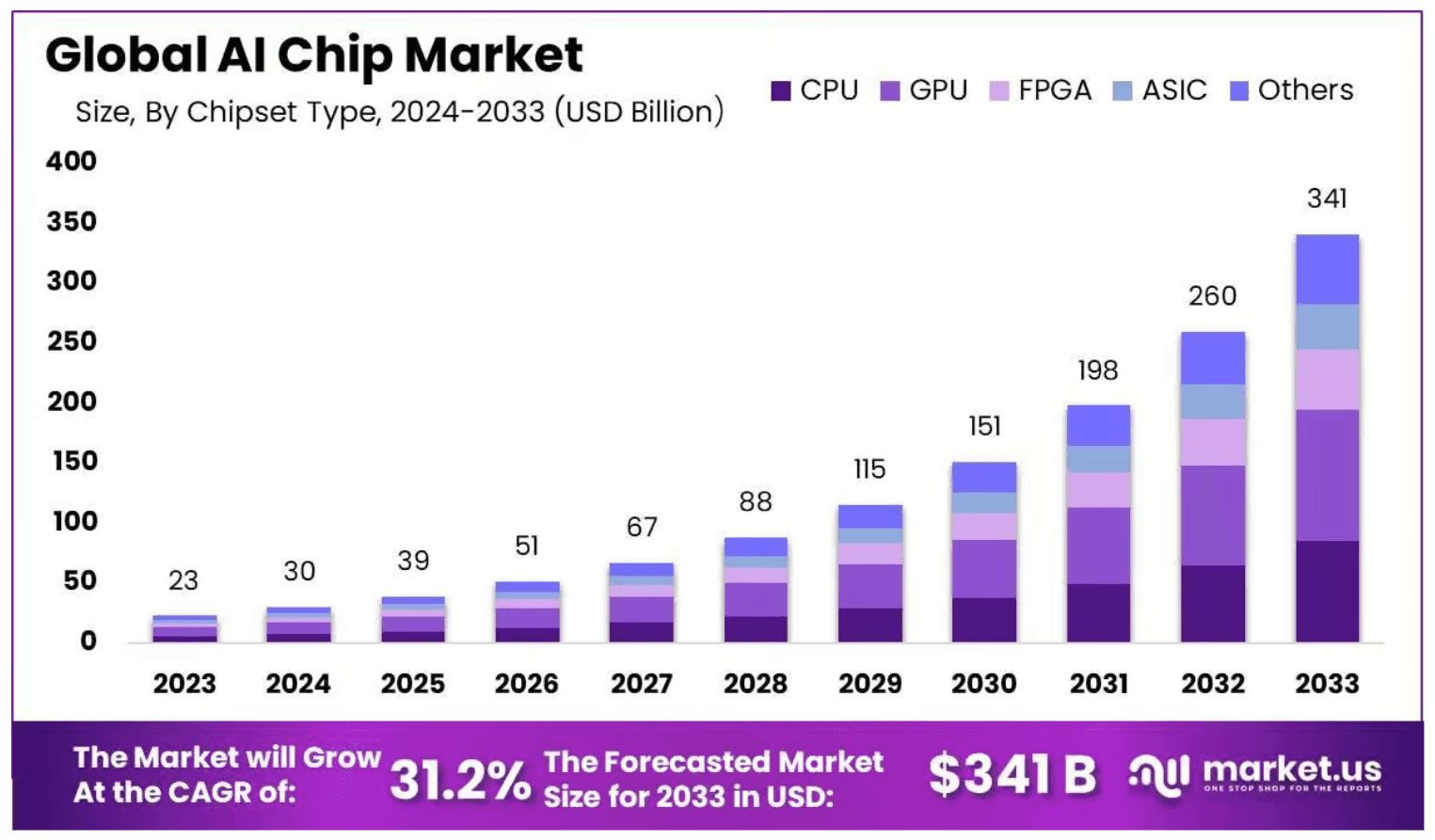

Furthermore, research into AI chip dynamics suggests the ASIC market will continue growing at a CAGR exceeding 30% through 2033.
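To put that growth rate in perspective, a constant annual rate r compounds into a total multiple of (1 + r)^n over n years. The minimal sketch below assumes, hypothetically, a 2025 base year (the research's actual base year isn't given here), which makes 2033 eight years out; `growth_multiple` is an illustrative helper, not part of any cited model.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return how many times a market grows at a constant annual rate."""
    # Compound growth: each year multiplies the market by (1 + cagr).
    return (1.0 + cagr) ** years


if __name__ == "__main__":
    # Hypothetical base year; only the 2033 end year comes from the research.
    base_year, end_year = 2025, 2033
    years = end_year - base_year
    multiple = growth_multiple(0.30, years)
    print(f"30% CAGR over {years} years: about {multiple:.1f}x")
```

Because the research describes growth *exceeding* 30%, the resulting multiple (roughly 8x under these assumptions) is a floor rather than a forecast.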

On paper, it looks like a classic disruption event: high-margin incumbents being chipped away at by efficient, internal, "good enough" alternatives.

But there is a fatal flaw in this logic. This bearish thesis treats Nvidia like a pure-play hardware vendor—a commodity shop selling premium silicon that can be easily swapped out. This is a profound misunderstanding of the modern AI stack. In reality, hyperscalers aren't replacing Nvidia; they are inadvertently building a massive, physical, and financial foundation that makes Nvidia’s ecosystem more entrenched than ever.

Custom silicon isn't the poison that kills Nvidia; it is the secondary infrastructure that allows Nvidia to move up the value chain. Far from being a "Nvidia killer," the rise of internal ASICs is the ultimate proof that the AI transition is still in its infancy, and that in the race for AI dominance, the only way to play is to be the platform, not just the chip.

The "Training Tax" and the Velocity Moat

The current investor consensus is built on a fundamental misunderstanding of the AI development lifecycle. The narrative goes like this: Hyperscalers are tired of paying Nvidia’s premium, so they are pouring billions into custom ASICs (Application-Specific Integrated Circuits) like Google’s TPU or AWS’s Trainium to replace the GPU.

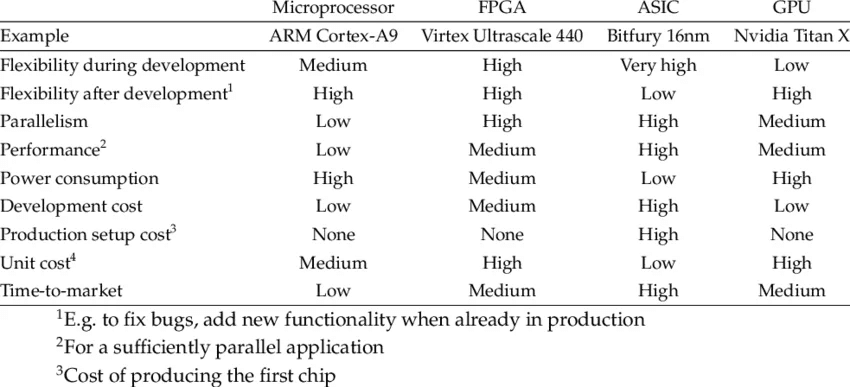

But this thesis ignores the fact that flexibility is the ultimate performance metric.

When it comes to foundation models, state-of-the-art (SOTA) is a moving target. Model architectures, activation functions, and attention mechanisms evolve every few months. When a research team makes a breakthrough that changes how a model processes data, they need hardware that can adapt instantly.

This is where the so-called "Training Tax" comes in.

Custom silicon is inherently rigid; it is hard-wired for a specific set of mathematical operations. By the time a hyperscaler finishes the 18-to-24-month cycle of designing, taping out, and deploying a custom chip, the underlying model architecture has likely shifted, rendering their "optimized" silicon inefficient or obsolete.

Nvidia, by contrast, sells programmable versatility. They aren't just selling a static brick of silicon; they are selling a massive, CUDA-optimized ecosystem that allows developers to reconfigure their entire stack via software updates.

Hyperscalers are forced to pay the "Training Tax"—they continue to buy Nvidia GPUs for their most critical training workloads because they cannot afford the cost of being "locked into" a custom chip that can’t keep up with the pace of innovation.

Essentially, Nvidia provides the velocity that hyperscalers cannot build in-house. They have created a velocity moat: as long as AI research continues to move faster than the hardware design cycle, Nvidia will remain the only viable platform for the cutting edge.

Custom Silicon: The "Utility" Play, Not the "Engine" Play

The media loves a good old “David vs. Goliath” story, which partly explains the fascination with AWS’s Trainium and Google’s TPU as the slingshot that will take down Nvidia, the king of the Mag Seven, and its fortress of a moat. However, this popular narrative overlooks the physics of data center economics.

Hyperscalers aren't building custom silicon to win the AI arms race. The real reason has more to do with managing their electricity bill.

To understand why, you have to distinguish between the "Engine" and the "Utility."

The Commodity Shift

Nvidia is the Engine. It is high-performance, general-purpose, and designed to handle the chaotic, evolving frontier of LLM training where model architectures change every six months. Custom silicon, by contrast, is a Utility. It is designed for "Inference"—the repetitive, predictable task of running a model once it has already been trained.

When Google or Meta moves a stable workload (like photo tagging or ad ranking) onto its own chips, it isn't really "replacing" Nvidia. It is offloading the "boring" math onto cheaper, more specialized (but more limited) hardware.

The Pressure-Relief Valve

Counter-intuitively, this shift actually strengthens Nvidia’s position.

By moving low-margin, high-volume tasks to internal silicon, hyperscalers end up doing two things:

Free up Power and Rack Space: Data centers are constrained by megawatts, not just square footage. Every custom chip running a basic recommendation engine saves power that can then be redirected toward a massive, high-margin Nvidia Blackwell cluster.

Optimize the Balance Sheet: Custom chips act as a pressure-relief valve for CAPEX. If a hyperscaler tried to run every single AI task on $40,000 GPUs, the unit economics of the cloud would collapse.
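The power argument is simple arithmetic: under a fixed facility budget, every watt a stable workload stops drawing can feed accelerators elsewhere. The sketch below is a hypothetical illustration with made-up figures (a workload drawing 10 MW on GPUs versus 4 MW on custom chips, and an all-in 1,200 W per GPU); `gpus_enabled_by_offload` is an illustrative name, and real numbers vary widely by facility and chip generation.

```python
def gpus_enabled_by_offload(
    workload_watts_on_gpus: int,
    workload_watts_on_asics: int,
    watts_per_gpu_all_in: int,
) -> int:
    """Extra GPUs a fixed power budget can host after moving one workload to ASICs."""
    freed_watts = workload_watts_on_gpus - workload_watts_on_asics
    if freed_watts <= 0:
        return 0  # the offload saved no power, so no GPU capacity is freed
    # Whole GPUs only: floor division discards any partial GPU.
    return freed_watts // watts_per_gpu_all_in


if __name__ == "__main__":
    MW = 1_000_000  # watts per megawatt
    # All figures are hypothetical placeholders, not measured data.
    extra = gpus_enabled_by_offload(10 * MW, 4 * MW, 1_200)
    print(f"Power freed for roughly {extra:,} additional GPUs")
```

Under these placeholder numbers, the 6 MW saved by the offload would power about 5,000 more GPUs without adding a single megawatt to the facility.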

The "Good Enough" Trap

Custom ASICs are rigid: they are built for the "now." In the time it takes to design, tape out, and manufacture one, AI research has often moved on to a new mathematical approach that the chip handles inefficiently.

Nvidia’s GPUs are the only hardware "liquid" enough to flow with the research. As long as AI remains an experimental, fast-moving field, the "Engine" will always be green. The custom chips will remain the "Utility"—the quiet background hum that keeps the lights on while Nvidia does the heavy lifting.

The "Platformization" of Nvidia—From Chipmaker to Data Center OS

The fatal flaw in the "Nvidia Killer" thesis is the assumption that Nvidia is still just selling a discrete piece of silicon that fits into a PCIe slot. If you view the H100 or Blackwell as a standalone product, a custom ASIC from Google or AWS looks like a viable substitute. But Nvidia hasn't been a "chip company" for years. It has evolved into the integrated backplane of the modern AI factory.

While hyperscalers are busy designing specialized "engines" (their custom chips), Nvidia has been busy building the entire "chassis"—the networking, the interconnects, and the software libraries that allow 100,000 GPUs to act as a single, coherent brain.

Custom chips are often islands. They excel at specific, isolated tasks but can struggle with the massive east-west (server-to-server) traffic required for trillion-parameter models. Nvidia’s acquisition of Mellanox, a leading InfiniBand vendor, together with its own NVLink interconnect, means that even if a hyperscaler uses its own silicon for certain workloads, those workloads often still run across networking hardware that Nvidia designs and sells.

And then there is the CUDA factor. CUDA has grown from a parallel programming platform into a massive collection of pre-optimized libraries and "primitives" for industries ranging from drug discovery to climate modeling.

To use a custom AWS Trainium or Google TPU, a developer has to "translate" their work into a proprietary stack. With Nvidia NIMs (Inference Microservices), on the other hand, Nvidia has effectively created an "App Store" for AI. They provide pre-packaged, optimized containers that "just work."

By the time a hyperscaler perfects its internal chip, Nvidia has already moved the goalposts, integrating its GPUs so deeply with Spectrum-X networking and BlueField DPUs (Data Processing Units) that any individual chip becomes secondary to the platform.

Overall, hyperscalers aren't building Nvidia killers; they are building "value-tier" alternatives for their most boring, stable workloads. For everything else (the frontier models that define the future), they are more locked into the Nvidia platform than ever before.

Conclusion: Trading the Volatility of the New Standard

If the "Custom Silicon" narrative has taught us anything, it’s that the market is prone to overreacting to surface-level threats while missing the deeper structural shifts. Nvidia isn't being replaced; it is being integrated into the very fabric of the global computing grid.

However, for investors, this transition won't be a straight line. Between energy constraints, hardware cycles, and the shifting correlation between Tech and Digital Assets, the "New Standard" is defined by volatility.

To capture the growth of this ecosystem without being sidelined by the inevitable drawdowns, you need a strategy that moves as fast as the market does.

The Tactical Edge: AlphaFactory "Tech & Crypto"

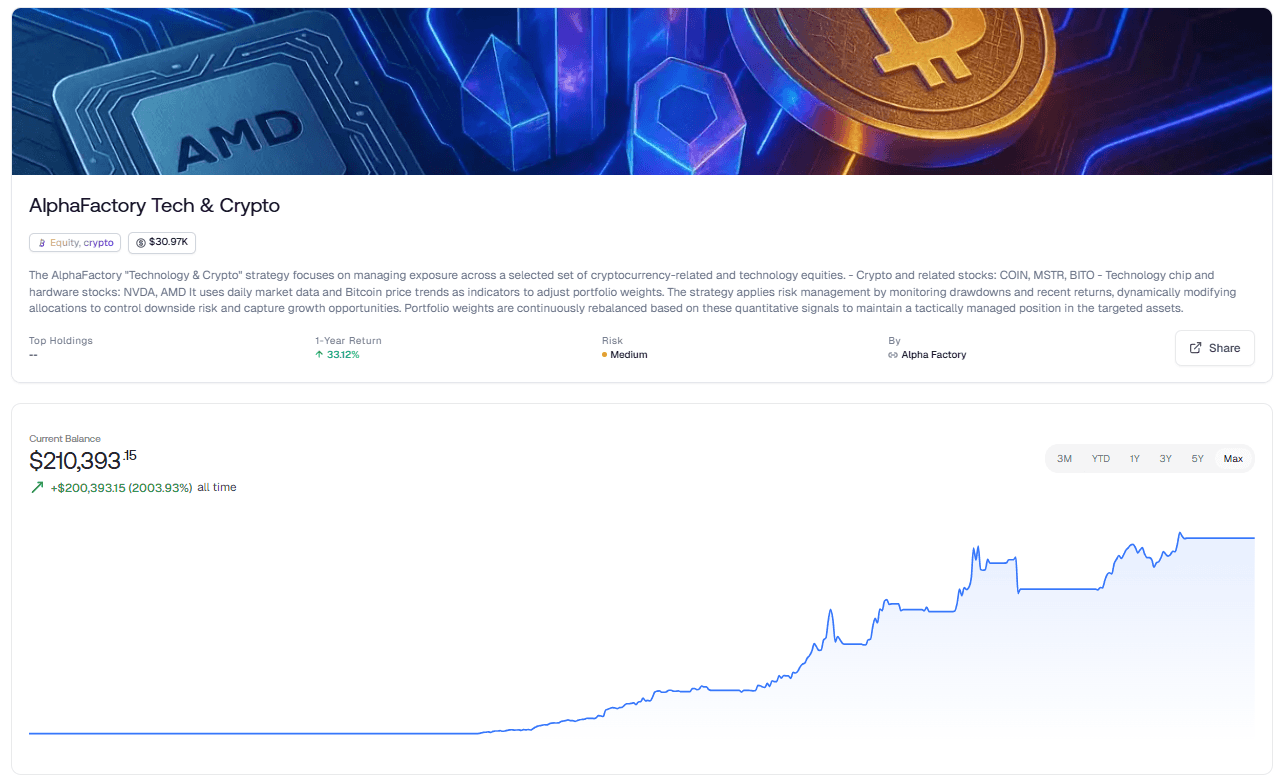

We’ve built a strategy specifically designed for this high-stakes environment. AlphaFactory’s Tech & Crypto strategy doesn’t just bet on the winners—it manages the risk of being right.

By focusing on the leaders of the AI and Digital Asset revolution, this strategy provides exposure to the core engines of the next decade:

The Hardware Titans: Strategic positions in NVDA and AMD.

The Crypto Pioneers: Exposure to COIN, MSTR, and BITO.

Rather than static allocation, AlphaFactory uses daily market data and Bitcoin price trends as quantitative signals. When the macro environment shifts or drawdowns hit a specific threshold, the strategy dynamically modifies portfolio weights to protect your capital. It is built to capture the explosive upside of the "Nvidia Era" while actively managing the downside risk that comes with such transformative tech.
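AlphaFactory's actual rules and thresholds aren't described here, so the following is only a generic, hypothetical illustration of the kind of drawdown-threshold rule the paragraph mentions: measure how far the portfolio sits below its running peak, and once that decline crosses a threshold, scale risky positions down and hold the remainder in cash. The 20% threshold, 50% scaling, equal weights, and function names are all assumptions, and the Bitcoin trend signal is not modeled.

```python
def drawdown(values: list[float]) -> float:
    """Fractional decline of the latest value from its running peak."""
    peak = max(values)
    return 1.0 - values[-1] / peak


def adjust_weights(
    weights: dict[str, float],
    dd: float,
    threshold: float = 0.20,  # hypothetical trigger level
    scale: float = 0.5,       # hypothetical de-risking factor
) -> dict[str, float]:
    """Halve risky positions and move the rest to cash once drawdown hits the threshold.

    Assumes the input weights (including any existing "CASH") sum to 1.
    """
    if dd < threshold:
        return dict(weights)  # below the trigger: keep the allocation unchanged
    scaled = {t: w * scale for t, w in weights.items() if t != "CASH"}
    scaled["CASH"] = 1.0 - sum(scaled.values())  # cash absorbs everything trimmed
    return scaled


if __name__ == "__main__":
    # Hypothetical equal-weight book across the tickers named above.
    book = {t: 0.2 for t in ("NVDA", "AMD", "COIN", "MSTR", "BITO")}
    dd = drawdown([100.0, 120.0, 90.0])  # 25% below the 120 peak
    print(f"drawdown={dd:.0%}", adjust_weights(book, dd))
```

A production system would layer in more signals and smoother transitions; the point of the sketch is only the mechanism of a threshold-triggered reweighting.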

Don't just watch the shift happen—trade it with precision now.

